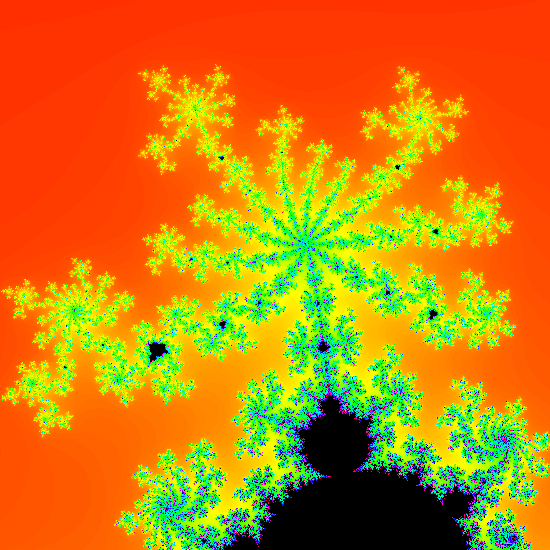

In this series we’re going to build an app to view the Mandelbrot set. While this is not necessarily something that is likely to be of direct use to the majority of app developers (except for those that have a desire to develop their own Mandelbrot set viewing apps), there are some techniques that we’ll explore along the way that most certainly will be of relevance to many.

In the previous article we look at the theory and mathematics behind the Mandelbrot set and looked a little bit as to why some areas just outside the set are quite interesting. In this article we’ll look at how to actually render it in Android. The theory that we discussed previously should make it fairly clear that it’s going to involve some fairly intensive floating point calculations, so rendering times may be quite slow. Regular readers of Styling Android may recall a previous series about rendering a colour wheel where we found that we could vastly improve intensive graphics operations by performing them directly on the GPU using Renderscript. And that is precisely the approach that we’re going to use to render the Mandelbrot set.

The main entry point of our Renderscript script is:

#pragma version(1)

#pragma rs java_package_name(com.stylingandroid.mandelbrot)

#include "hsv.rsh"

const double BASE_START_X = -2.0;

const double BASE_END_X = 1.0;

const double BASE_START_Y = -1.2;

const double BASE_END_Y = 1.2;

float width;

float height;

int32_t iterations;

double startX = BASE_START_X;

double startY = BASE_START_Y;

double endX = BASE_END_X;

double endY = BASE_END_Y;

void mandelbrot(

rs_script script,

rs_allocation allocation,

int32_t iterations_value

) {

width = rsAllocationGetDimX(allocation);

height = rsAllocationGetDimY(allocation);

double canvasWidth = (BASE_END_X - BASE_START_X);

double canvasHeight = (BASE_END_Y - BASE_START_Y);

double canvasRatio = canvasWidth / canvasHeight;

double imageRatio = width / height;

if (canvasRatio > imageRatio) {

double scaleFactor = canvasRatio / imageRatio;

startX = BASE_START_X;

endX = BASE_END_X;

startY = BASE_START_Y * scaleFactor;

endY = startY + (canvasHeight * scaleFactor);

} else {

double scaleFactor = imageRatio / canvasRatio;

startX = BASE_START_X * scaleFactor;

endX = startX + (canvasWidth * scaleFactor);

startY = BASE_START_Y ;

endY = BASE_END_Y;

}

iterations = iterations_value;

rsForEach(script, allocation, allocation);

}

static uchar4 getColour(int32_t value) {

float3 hsv;

if (value < iterations) {

hsv.x = 360.0 * (double)value / (double)iterations;

hsv.y = 1.0;

hsv.z = 1.0;

} else {

hsv.x = 0.0;

hsv.y = 1.0;

hsv.z = 0.0;

}

return rsPackColorTo8888(hsv2Argb(hsv, 255.0));

}

This performs the necessary setup before we can render the individual pixels within the target image. I'm not going to do a line-by-line explanation of this, as this has been covered previously. For anyone completely new to RenderScript, I would recommend reading that article first as our explains what it is any why it performs particularly well when it comes to graphics rendering operations. The important things to look for are the BASE_* constants which define the bounds that we're going to use - anything outside this area is definitely outside the Mandelbrot set, so we only need to plot the ares within this. However, the aspect ratio of this area will not necessarily match that of the display, so we need to make adjustments. The majority of the mandelbrot() function is to calculate the dimensions of the canvas that we are actually going to plot. The logic that we're going to use here is that we'll display the entirety of the area defined by our BASE_* constants, and then add padding. The aspect ratio of the area we're interested in is 3:2.4 and if the display aspect ratio is wider than this, then we'll add padding to either side, but if the aspect ratio of the display is taller than this, then we'll add padding to the top and bottom.

The values startX, endX, startY, and endY are the bounds of the results of these calculations and is the area that we'll actually plot.

This is followed by a utility function that we'll use to calculate colour values shortly. It takes a number of iterations and colours it black if it matches the iterations limit, but if it is less than that it alters the hue depending on how many iterations was used. A low number will produce values towards the red end of the colour spectrum, and as the value increases in will produce values through the rainbow colours, and values close to the iteration limit will be towards the violet end of the colour spectrum. This uses the hssv2argb() function from the include file hsv.rsh which is actually the same as the one used here.

The rsForEach() function will run the kernel (which we'll look at next) for each pixel within the allocation - which is the image buffer that we'll later be able to access from our Kotlin code. The kernel itself looks like this:

uchar4 RS_KERNEL root(uchar4 in, int32_t x, int32_t y) {

double px = startX + ((double)x / width) * (endX - startX);

double py = startY + ((double)y / height) * (endY - startY);

double zr = 0.0;

double zi = 0.0;

double zrSquared = 0.0;

double ziSquared = 0.0;

int32_t n = 0;

while (n <= iterations && (zrSquared + ziSquared) < 4.0) {

zrSquared = zr * zr;

ziSquared = zi * zi;

zi *= zr;

zi += zi + py;

zr = zrSquared - ziSquared + px;

n++;

}

return getColour(n);

}

This applies the function fc (z) = z2 + c recursively until we either hit the iteration limit, or the modulus of the current value of z exceeds 2. zr represents the real component of z, and zi represents the imaginary component.

There are a couple of optimisations at work here which are worthy of note. It is important to optimise this function because it is the workhorse of the entire script. It will be executed separately for each pixel in the image that we are creating, and the while loop may loop until the iteration limit is reached. So for a 1440 x 2880 device, rendering with an iteration limit of 180, the worst case scenario is that the contents of this while loop may be executed around 746.5 million times. So any minor optimisations here could have a major impact on performance.

The first optimisation is that we store a value for the square of x because we use it twice - first in the calculation for the new value of zr, and second within the condition of the while loop to check whether the modulus of z is greater than two. Performing the same calculation twice would slow things down slightly.

The second optimisation is in the modulus calculation itself. This calculation is actually Pythagoras' Theorem because we want to find the distance from the origin, so we're looking for the square root of the sum of the squares of zr and zi to be less than two. Logically we can represent this in C as sqrt(xSquared + ySquared) < 2.0). But we can eliminate the square root calculation if we compare xSquared + ySquared to the square of 2.0. As 2.0 is constant, we can optimise this to xSquared + ySquared < 4.0.

One possible optimisation would be for the calculations of px and py. px will be the same for each distinct value of x, and the same applies to py and y. It would seem logical that we should calculate a mapping of these values in the entry point function, and then simply look them up in the kernel. The size of these mapping arrays need to be allocated dynamically because they are determined by the width and height of the image being generated. Dynamic object sizing in Renderscript is usually done using Allocation objects. I tried this and found that the time to retrieve a value from a specific offset in an Allocation actually took longer than performing the calculation startX + ((double)x / width) * (endX - startX). This is, most likely, because each GPU core is designed to perform calculations quickly and is able to do this faster than an allocation lookup. Irrespective of the cause, it highlights that it is important to benchmark your optimisations to ensure that they actually perform better, otherwise ditch them, as I did in this case!

On final thing worth mentioning is the precision of the points that we'll be calculating. Some may have noticed that I have elected to use double values throughout. While floats may be more performant, I have elected for precision over performance. The rationale here is that a 32-bit float will generally have around 7 decimal digits of precision, and a 64-bit double will be over twice that at 15 decimal digits of precision. Later in the series we'll be looking at how we can zoom in to explore the Mandlebrot set, and the generated images will start to become blocky when we hit the precision limits. So going with double here will allow us to zoom in much farther than if we instead went with float.

We've mapped the theory that we covered in the previous article to code to actually render the Mandelbrot set and optimised along the way. In the next article we'll look at how to incorporate this in to an app to show the Mandelbrot set.

We've only covered a single source code file so far (which is fully reproduced here), so there isn't working app code to publish just yet, but that will follow in the next article.

© 2019, Mark Allison. All rights reserved.

Copyright © 2019 Styling Android. All Rights Reserved.

Information about how to reuse or republish this work may be available at http://blog.stylingandroid.com/license-information.