At the time of writing, March 2021, the initial developer preview of Android 12 has recently arrived. If the many rumours are true, Android 12 will have a design system refresh named Material NEXT. While the details of what this actually means are vague, there are likely to be similarities to other mobile platforms. One UI pattern that is common on iOS is to blur the background behind foreground UI components, such as Dialogs. It has long been possible to achieve this on Android by drawing Views to a Bitmap then using RenderScript or OpenGL to blur it. But there are potential performance issues, and it’s not something that will be done consistently across the platform. That looks to be about to change thanks to a new API that has appeared in Android 12: RenderEffect.

Background

I mentioned in the introduction that it is already possible to do what we need to. So what does RenderEffect offer us? The primary thing, in my understanding, is performance. Android uses RenderNodes internally to build hardware-accelerated rendering hierarchies. Essentially, this means that the UI hierarchies get rendered on the GPU. RenderEffect hooks into this mechanism and allows us to apply various effects as part of the hardware-accelerated rendering pipeline. This gives us a couple of efficiencies. Firstly, the GPU will be used to perform the effect processing, so we don’t need to worry about that. Secondly, the processing will only be done when the relevant part of the UI hierarchy is invalidated and needs to be redrawn. The third advantage is that the API is really simple to use. Doing the equivalent in RenderScript would require significantly more effort and code. The final thing worth noting is that whatever processing is required on the CPU (as opposed to the GPU) will most likely take place on the Render thread, and not our Main / UI thread. The only parts of the process that could potentially block the Main thread are creating our RenderEffect and applying it to the layout, both of which are fairly trivial tasks in terms of processing.

Blur

Let’s start by looking at how we can perform a blur. We first create the blur RenderEffect by calling a static method:

val blurEffect = RenderEffect.createBlurEffect(x, y, Shader.TileMode.MIRROR)

The first two arguments specify the amount of blur in the horizontal and vertical planes respectively. The larger the value, the stronger the blur effect will be. The third argument specifies how the blur will render near the edges. The TileMode API docs cover the various options for this.

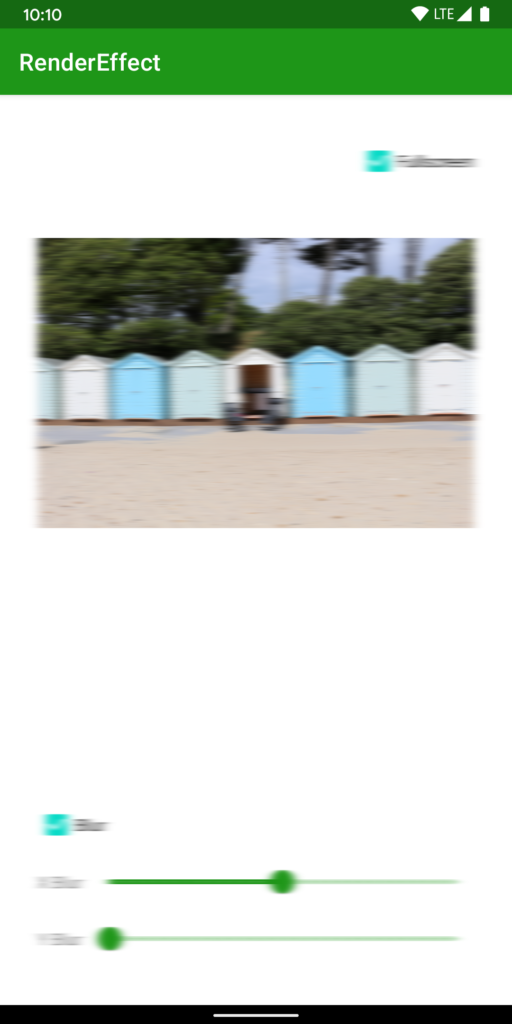

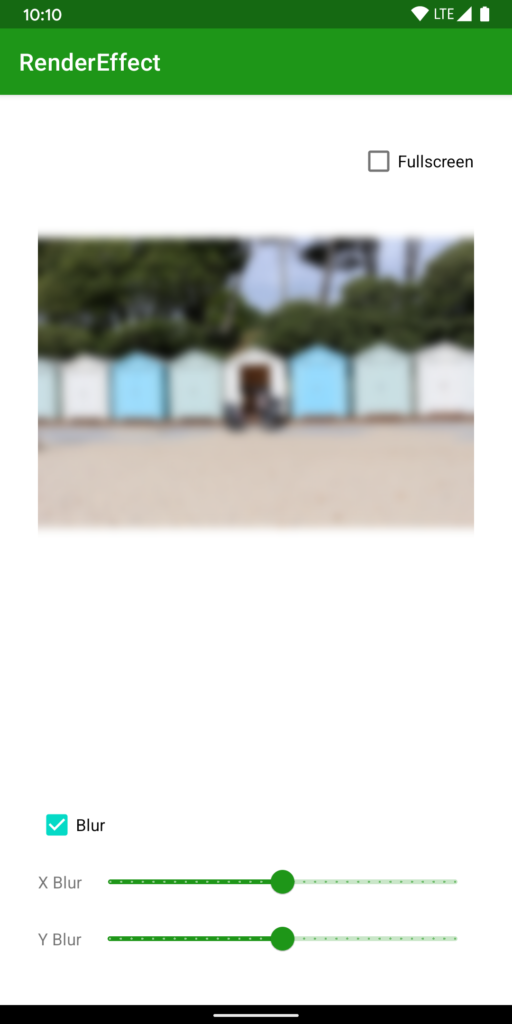

We can create some differing effects by using asymmetric values for the x and y blur components. For example, this image has uniform x and y blur values, each set to 16.

If, however, we use an x blur of 16 and a y blur of 0, we get quite a different effect, which is more like a horizontal motion blur.
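As a rough sketch, the two variants described above might be created like this. The names uniformBlur and horizontalBlur are chosen purely for illustration, and the radii are passed as Float values because that is what createBlurEffect() expects:

```kotlin
import android.graphics.RenderEffect
import android.graphics.Shader

// Uniform blur: the same radius on both axes.
val uniformBlur: RenderEffect =
    RenderEffect.createBlurEffect(16f, 16f, Shader.TileMode.MIRROR)

// Horizontal-only blur: no vertical component, which gives the
// motion-blur look described above.
val horizontalBlur: RenderEffect =
    RenderEffect.createBlurEffect(16f, 0f, Shader.TileMode.MIRROR)
```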

Once we have created a RenderEffect instance we can apply it to a view:

binding.image.setRenderEffect(blurEffect)

That’s it. Really. Remember what I mentioned earlier about the API being really simple to use? All we need to do is create an effect, and call setRenderEffect() on any View to apply it.
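Removing the effect is just as simple, because setRenderEffect() accepts null. Here is a minimal sketch of a toggle. The extension function setBlurred is a hypothetical helper rather than part of the platform, and the sketch assumes the androidx.annotation dependency is available for @RequiresApi:

```kotlin
import android.graphics.RenderEffect
import android.graphics.Shader
import android.os.Build
import android.view.View
import androidx.annotation.RequiresApi

// Hypothetical helper: blurs the View when enabled, restores it otherwise.
@RequiresApi(Build.VERSION_CODES.S)
fun View.setBlurred(enabled: Boolean, radius: Float = 16f) {
    val effect = if (enabled) {
        RenderEffect.createBlurEffect(radius, radius, Shader.TileMode.MIRROR)
    } else {
        null // passing null clears any effect that was previously set
    }
    setRenderEffect(effect)
}
```

With this in place, binding.image.setBlurred(true) would apply the blur and binding.image.setBlurred(false) would remove it again.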

Hierarchies

The other extremely powerful thing about setRenderEffect() is that it operates on entire View hierarchies. If we call it on just the ImageView in the layout, only the image is blurred:

However, we can instead apply the same blurEffect instance to the root view of the layout:

binding.root.setRenderEffect(blurEffect)

Doing this affects the root layout and the entire View hierarchy below it. Note: This layout does not include the AppBar. That is a system AppBar, so does not have the blur applied. However, if we included the AppBar within our layout it would also have the blur applied.

In the sample code, the Checkbox labelled “Fullscreen” toggles the effect between the image only and the entire layout.
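The sample project’s own implementation is not reproduced here, but a toggle along those lines could be sketched as follows. The function bindFullscreenToggle and its parameter names are assumptions made for illustration:

```kotlin
import android.graphics.RenderEffect
import android.view.View
import android.widget.CheckBox

// Hypothetical helper: moves the blur between the image and the root view.
fun bindFullscreenToggle(
    fullscreen: CheckBox,
    root: View,
    image: View,
    blurEffect: RenderEffect
) {
    fun update(wholeLayout: Boolean) {
        // Clear the effect from whichever view should no longer carry it,
        // so the image is not blurred twice when the root is blurred.
        root.setRenderEffect(if (wholeLayout) blurEffect else null)
        image.setRenderEffect(if (wholeLayout) null else blurEffect)
    }

    // Apply the initial state, then react to subsequent changes.
    update(fullscreen.isChecked)
    fullscreen.setOnCheckedChangeListener { _, isChecked -> update(isChecked) }
}
```

Clearing the effect on the image while the root is blurred matters because an effect on a parent already covers its children.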

Backwards Compatibility

These APIs only exist in API 31 and later, and it looks unlikely that they will get AndroidX support. I understand that RenderEffect relies upon some hooks that were added to the low-level rendering pipeline. Those hooks do not exist on earlier versions of the Android platform, so an AndroidX library simply wouldn’t be able to access the low-level RenderNode APIs necessary for this.
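In practice, this means that an app whose minSdkVersion is below 31 needs to guard these calls with a version check. A minimal sketch follows, in which blurIfSupported is a hypothetical helper name and earlier versions are simply left unblurred:

```kotlin
import android.graphics.RenderEffect
import android.graphics.Shader
import android.os.Build
import android.view.View

// Hypothetical helper: applies the blur where the platform supports it.
fun View.blurIfSupported(radius: Float = 16f) {
    if (Build.VERSION.SDK_INT >= Build.VERSION_CODES.S) {
        setRenderEffect(
            RenderEffect.createBlurEffect(radius, radius, Shader.TileMode.MIRROR)
        )
    }
    // Below API 31 nothing happens here. An app that needs a blur on older
    // versions would have to fall back to the Bitmap-based approach
    // mentioned in the introduction.
}
```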

Conclusion

This is an enormously powerful API which is very clean and simple to use. Not only that, it is also highly performant. In running and testing the sample code, I cannot detect any lag whatsoever when toggling the blur.

However, the APIs can become more powerful still, albeit with a small amount of additional complexity. In the next article, we’ll explore this further, along with a different kind of effect that we can achieve with RenderEffect.

The source code for this article is available here.

© 2021, Mark Allison. All rights reserved.

Copyright © 2021 Styling Android. All Rights Reserved.

Information about how to reuse or republish this work may be available at http://blog.stylingandroid.com/license-information.
